Can Innovation Curb AI’s Hunger for Power?

Nvidia says its machine-learning chips have become 45,000 times more energy efficient, with further improvements in the pipeline

Artificial intelligence is known for its seemingly insatiable appetite for energy. But some tech leaders and analysts are questioning the extent of AI’s footprint going forward, saying innovations in the sector could help offset rising energy demand.

There is certainly no shortage of forecasts detailing the ominous rise in AI’s energy consumption. One commonly cited report by Climate Action Against Disinformation, an association of environmental groups, suggests that AI could drive up global emissions by 80%.

Another estimate by researcher Alex de Vries—which he described as a worst-case scenario—suggests that Google’s AI alone could eventually rack up as much annual electricity demand as the country of Ireland.

Such predictions present a substantial challenge for the relationship between computing and the climate in the coming years.

However, others see reason for optimism. In a Salesforce survey of about 500 corporate sustainability professionals, published Wednesday, nearly half were concerned about AI’s potential negative impacts on sustainability efforts. Meanwhile, almost 60% thought the benefits of AI would offset its risks in addressing the climate crisis.

Microsoft founder Bill Gates recently weighed in on the subject, urging governments not to go “overboard” on concerns about AI’s energy footprint, and suggesting that the technology could actually drive a reduction in global energy demands.

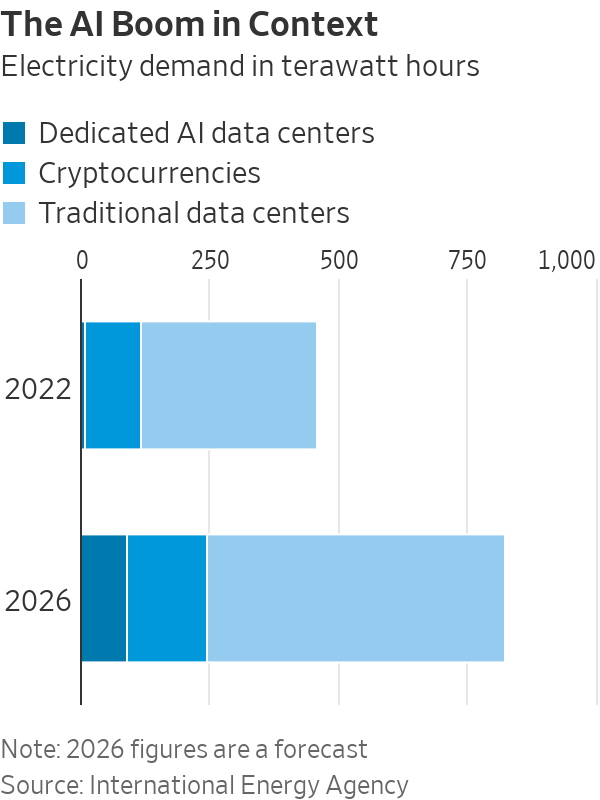

Putting the AI boom in context

The rise of AI should be considered in a broader perspective, according to Charles Boakye, U.S. sustainability analyst at Jefferies. He noted that relative to other industries, the technology still makes up only a fraction of global power demands.

“Data centers—the engines powering everything from AI to traditional computing to cryptocurrency—currently account for about 2% of global electricity consumption. Of this, AI accounts for roughly 0.5%,” Boakye said.

In terms of emissions, statistics published last year by the International Energy Agency showed that data centers and transmission networks together are responsible for 1% of energy-related greenhouse-gas emissions. For comparison, the oil-and-gas industry contributed just under 15% of emissions in 2022.

Looking ahead, the intergovernmental organization said that by 2026, demand for AI is expected to increase tenfold compared with 2023. Even so, IEA data showed that AI will still account for only roughly an eighth of total data-center electricity consumption.

“So in terms of AI demand, we’re talking about a small piece of a small piece,” Boakye said, noting that other areas of electrification, such as electric mobility, traditional data center growth and the industrial transition, will ask much greater questions of the power sector.

Charting efficiency trends

Demand is difficult to predict. But recent trends show that in practice, a rise in demand for computing power rarely correlates one for one with a rise in energy consumption, according to climate researcher Jonathan Koomey.

“Between 2010 and 2018, global data centres saw a 550% increase in compute instances and a 2,500% increase in storage capacity. This compares with just a 6% rise in electricity use,” he said.
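As a rough illustration of what those figures imply, the short Python sketch below converts the quoted percentage increases into growth factors and divides electricity growth by output growth. The function names are hypothetical, and the calculation assumes the quoted percentages all describe the same set of data centres over the same 2010 to 2018 period.

```python
def growth_factor(percent_increase: float) -> float:
    """Convert a percentage increase into a multiplier (550% -> 6.5x)."""
    return 1.0 + percent_increase / 100.0


def intensity_ratio(output_increase_pct: float, energy_increase_pct: float) -> float:
    """Electricity per unit of output at the end of the period, relative to the start."""
    return growth_factor(energy_increase_pct) / growth_factor(output_increase_pct)


# Figures quoted by Koomey for 2010-2018
compute_ratio = intensity_ratio(550, 6)    # compute instances
storage_ratio = intensity_ratio(2500, 6)   # storage capacity

print(f"Electricity per compute instance: {compute_ratio:.1%} of the 2010 level")
print(f"Electricity per unit of storage: {storage_ratio:.1%} of the 2010 level")
```

On these numbers, electricity per compute instance fell to roughly a sixth of its 2010 level, and electricity per unit of storage to roughly a twenty-fifth.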

Koomey, previously a visiting professor at Stanford, Yale and Berkeley, is best known for his work on long-term trends in the energy efficiency of computing. The pattern, now known as Koomey’s Law, highlights the propensity of computing technologies to become more efficient over time.

AI may be a different animal, with some estimates suggesting large models such as ChatGPT use 10 times more energy per query than a Google search. But this is unsurprising for a relatively new technology, Koomey noted. In most areas of computing, energy demand tends to spike before levelling off as efficiencies gather pace, he said, noting that forecasts extrapolated from that initial spike will often be misguided.

Koomey cited a brief moment in the mid-1990s when internet data flows doubled every hundred days, a statistic that led to overinvestment in networks in the following years and left 97% of fibre capacity sitting unused.

Similar efficiency trends can be seen in the development of AI, Jefferies analyst Boakye said. Google’s new TPU processors, for example, are more than 67% more efficient than in 2022, and the energy used to train OpenAI’s ChatGPT models has fallen by a factor of 350 since 2016, he noted.

“It’s in their best interest, and in the interest of their business models, to increase that efficiency,” the analyst said.

Going for gold in the AI Olympics

If any company will have a say in the future of AI, it’s Nvidia. The U.S. technology company designs roughly 80% of the world’s specialized AI chips. Its next-generation GPUs, known as Blackwell, are touted to be 25 times more energy efficient than the current iteration, Hopper, while offering 30 times more computing power. Blackwell chips are slated for release later this year.

So far, the progress appears consistent, with Hopper 25 to 30 times more efficient than Nvidia’s previous generation of chips. Overall, the company said it has experienced a 45,000 times improvement in GPU energy efficiency over the last eight years.
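To put the eight-year figure in annual terms, the sketch below works out the average yearly gain it would imply. It rests on an assumption that is not in Nvidia’s statement: that the improvement compounded at a constant rate each year. Real gains are more likely to arrive in steps with each chip generation, so the result is only an average.

```python
import math

TOTAL_IMPROVEMENT = 45_000  # efficiency gain cited by Nvidia
YEARS = 8                   # period cited by Nvidia

# Assumption: the gain compounds at a constant rate every year.
annual_factor = TOTAL_IMPROVEMENT ** (1 / YEARS)

# Time needed for efficiency to double at that constant rate.
doubling_time_years = math.log(2) / math.log(annual_factor)

print(f"Implied average gain: about {annual_factor:.1f}x per year")
print(f"Implied doubling time: about {doubling_time_years * 12:.0f} months")
```

Under that assumption, the figure works out to a little under a fourfold gain each year, with efficiency doubling roughly every six months.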

Nvidia added that software optimisation also plays a big role in increasing the energy efficiency of its products.

“Once a platform has been launched, we will make it more efficient in a single year,” the company said. In the year following its launch in 2022, Hopper became two times more efficient after taking part in MLPerf, otherwise known as the Olympics of AI, in which tech companies compete and collaborate to drive improvements in the speed and efficiency of their models.

Part of this optimization involves taking large, energy-intensive AI models, such as ChatGPT, and refining them to perform more specific tasks. On July 18, for example, OpenAI launched GPT-4o mini, a smaller, smarter and more energy-efficient version of its previous GPT model. Koomey sees these more “lightweight” models driving demand in the future.

“I’m not convinced large-scale AI has a good business model at this point, despite them driving all the investment. The slam dunk machine learning usually involves smaller, more efficient models, focused on a specific task. For me, this is where most of the business value lies,” he said.

Training and education will be essential for getting a more targeted and efficient experience with AI, helping to narrow the gap between businesses and their sustainability goals, said Suzanne DiBianca, Salesforce’s chief impact officer.

According to Nvidia, another way of alleviating AI’s impact is directing workloads, particularly the more energy-intensive jobs of training AI models, to regions with more abundant resources, ideally renewables, which are set to keep a lid on electricity emissions in the coming years.

One company making strides in this space is NexGen Cloud, which builds renewable-powered data centers in areas with untapped energy resources.

According to co-founder and CSO Youlian Tzanev, a large portion of AI workloads don’t need to be performed close to traditional logistical hubs like London or New York, and can be powered from more remote areas in countries like Canada and Norway with excess hydropower.

“We have significantly more power than people believe. The power just isn’t reaching the grid in many cases and is going to waste, and so that is where we focus our efforts,” Tzanev said.

In the U.S., Crusoe Energy Systems offers another example of startups finding innovative ways to power the AI boom. Crusoe’s modular data centers are designed to run on excess natural gas produced at oil wells, achieving a 99.9% methane reduction in the process.

Common forecasting pitfalls

Projecting an outlook for AI’s energy demands is far from straightforward. In its midyear electricity update, the IEA noted that estimates exhibit a wide range of uncertainty, with some analyses following overly “simplistic” extrapolations.

For instance, the organization said certain studies make the mistake of assuming data center operators build all the facilities for which they apply to utilities. Given that several applications can be made for each new data center, this can lead to a multiplication of estimates.

Within data centers themselves, it is tempting for forecasters to imagine computers working flat out around the clock, Koomey said. In practice, GPUs usually operate on much less than their full power capacity, he added.

Based on modeling carried out by Nvidia, GPUs on average tend to run on less than 70% of their potential power. One particular function, known as Multi-Instance GPU, enables workloads—and therefore energy consumption—to be split into seven distinct components, with each able to function independently.
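The size of that forecasting error can be sketched with simple arithmetic. The example below compares a forecast that assumes GPUs draw full rated power with the 70% ceiling from Nvidia’s modeling. It assumes electricity use scales in proportion to average power draw and ignores cooling and other overheads. The variable names are illustrative only.

```python
ASSUMED_UTILISATION = 1.00  # forecaster's assumption: GPUs at full rated power
ACTUAL_UTILISATION = 0.70   # upper bound on average draw, per Nvidia's modeling

# Because actual draw is below 70%, this is a lower bound on the overstatement.
overstatement = ASSUMED_UTILISATION / ACTUAL_UTILISATION - 1

print(f"Forecast overstates GPU electricity use by at least {overstatement:.0%}")
```

On those assumptions, a flat-out forecast would overstate GPU electricity use by at least about 43%.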

In addition, the company noted the role of substitution effects, in which traditional computing workloads are transferred onto AI platforms and subsequently performed more efficiently—an aspect that can be easily overlooked.

Forecasts can also conflate local data-centre developments with broader energy demands, Koomey added, noting that the most common estimates for electricity demand come from local utility companies.

In the U.S. as a whole, electricity use actually fell in 2023 compared with the previous year, according to the U.S. Energy Information Administration. In March 2024—the most recent month of available data—total demand reached 306 billion kilowatt-hours, down from 317 billion kWh in the year-prior period.
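For scale, the year-over-year change in those two monthly figures can be computed directly. The sketch below uses only the two EIA numbers quoted above, and the helper name is hypothetical.

```python
def percent_change(new: float, old: float) -> float:
    """Percentage change from old to new; negative values indicate a decline."""
    return (new - old) / old * 100.0


march_2024_bn_kwh = 306  # total U.S. demand, March 2024
march_2023_bn_kwh = 317  # total U.S. demand, year-prior period

change = percent_change(march_2024_bn_kwh, march_2023_bn_kwh)
print(f"Year-over-year change: {change:.1f}%")
```

That is a decline of about 3.5% for the month.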

“I worry that people are jumping on the explosive demand-growth train before really understanding what’s going on,” Koomey said. “If you cluster data centers in certain places you’re going to see some local power constraints, but that doesn’t necessarily mean that AI will be a key driver of electricity use more broadly.”

Corrections & Amplifications

Nvidia said it has experienced a 45,000 times improvement in GPU energy efficiency over the last eight years. An earlier version of this article incorrectly said the company developed its first GPU eight years ago. (Corrected on July 24)

Copyright 2020, Dow Jones & Company, Inc. All Rights Reserved Worldwide.



The lunar flyby would be the deepest humans have traveled in space in decades.

It’s go time for the highest-stakes mission at NASA in more than 50 years.

On April 1, the agency is set to launch four astronauts around the moon, the deepest human spaceflight since the final Apollo lunar landing in 1972.

The launch window for Artemis II, as the mission is called, opens at 6:24 p.m. ET.

National Aeronautics and Space Administration teams have been preparing the vehicles to depart from Florida’s Kennedy Space Center on the planned roughly 10-day trip. Crew members have trained for years for this moment.

Reid Wiseman, the NASA astronaut serving as mission commander, said he doesn’t fear taking the voyage. A widower, he does worry at times about what he is putting his daughters through.

“I could have a very comfortable life for them,” Wiseman said in an interview last September.

“But I’m also a human, and I see the spirit in their eyes that is burning in my soul too. And so we’ve just got to never stop going.”

Wiseman’s crewmates on Artemis II are NASA’s Victor Glover and Christina Koch, as well as Canadian Space Agency astronaut Jeremy Hansen.

What are the goals for Artemis II?

The biggest one: Safely fly the crew on vehicles that have never carried astronauts before.

The towering Space Launch System rocket has the job of lofting a vehicle called Orion into space and on its way to the moon.

Orion is designed to carry the crew around the moon and back. Myriad systems on the ship—life support, communications, navigation—will be tested with the astronauts on board.

SLS and Orion don’t have much flight experience. The vehicles last flew in 2022, when the agency completed its uncrewed Artemis I mission.

How is the mission expected to unfold?

Artemis II will begin when SLS takes off from a launchpad in Florida with Orion stacked on top of it.

The so-called upper stage of SLS will later separate from the main part of the rocket with Orion attached, and use its engine to set up the latter vehicle for a push to the moon.

After Orion separates from the upper stage, it will conduct what is called a translunar injection—the engine firing that commits Orion to soaring out to the moon. It will fly to the moon over the course of a few days and travel around its far side.

Orion will face a tough return home after speeding through space. As it hits Earth’s atmosphere, Orion will be flying at 25,000 miles an hour and face temperatures of 5,000 degrees as it slows down. The capsule is designed to land under parachutes in the Pacific Ocean, not far from San Diego.

Is it possible Artemis II will be delayed?

Yes.

For safety reasons, the agency won’t launch if certain tough weather conditions roll through the Cape Canaveral, Fla., area. Delays caused by technical problems are possible, too. NASA has other dates identified for the mission if it doesn’t begin April 1.

Who are the astronauts flying on Artemis II?

The crew will be led by Wiseman, a retired Navy pilot who completed military deployments before joining NASA’s astronaut corps. He traveled to the International Space Station in 2014.

Two other astronauts will represent NASA during the mission: Glover, an experienced Navy pilot, and Koch, who began her career as an electrical engineer for the agency and once spent a year at a research station at the South Pole. Both have traveled to the space station before.

Hansen is a military pilot who joined Canada’s astronaut corps in 2009. He will be making his first trip to space.

Koch’s participation in Artemis II will mark the first time a woman has flown beyond orbits near Earth. Glover and Hansen will be the first African-American and non-American astronauts, respectively, to do the same.

What will the astronauts do during the flight?

The astronauts will evaluate how Orion flies, practice emergency procedures and capture images of the far side of the moon for scientific and exploration purposes (they may become the first humans to see parts of the far side of the lunar surface). Health-tracking projects of the astronauts are designed to inform future missions.

Those efforts will play out in Orion’s crew module, which has about two minivans’ worth of living area.

On board, the astronauts will spend about 30 minutes a day exercising, using a device that allows them to do dead lifts, rowing and more. Sleep will come in eight-hour stretches in hammocks.

There is a custom-made warmer for meals, with beef brisket and veggie quiche on the menu.

Each astronaut is permitted two flavored beverages a day, including coffee. The crew will hold one hourlong shared meal each day.

The Universal Waste Management System—that’s the toilet—uses air flow to pull fluid and solid waste away into containers.

What happens after Artemis II?

Assuming it goes well, NASA will march on to Artemis III, scheduled for next year. During that operation, NASA plans to launch Orion with crew members on board and have the ship practice docking with lunar-lander vehicles that Elon Musk’s SpaceX and Jeff Bezos’ Blue Origin have been developing. The rendezvous operations will occur relatively close to Earth.

NASA hopes that its contractors and the agency itself are ready to attempt one or more lunar landing missions in 2028. Many current and former spaceflight officials are skeptical that timeline is feasible.
